Binary Cross Entropy (BCELoss) creates a criterion that measures the binary cross entropy (BCE) between the target and the output.

Mean Squared Error using PyTorch: the example draws a random target with target = torch.randn(3, 4). Like Mean Absolute Error (MAE), Mean Squared Error (MSE) works on the paired differences between ground truth and prediction: it sums the squared differences and divides by the number of such pairs. The MSE loss function is generally used when larger errors should weigh more heavily. One drawback is that squaring the differences also squares the units of the data, so comparing an MSE value against quantities in the original units is not justified.

Mean Absolute Error with the PyTorch module (nn.L1Loss): start with import torch. The version without the module ends by printing the result with print("MAE error is: " + str(mae_value)).
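As a minimal sketch of the three criteria mentioned above, the snippet below computes MAE, MSE and BCE with torch.nn. The tensor names, the fixed seed, and the sigmoid and random 0/1 labels used for the BCE case are assumptions made for illustration, not values from the original notebook.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # fixed seed so repeated runs give the same numbers

# Hypothetical data: a 3x4 prediction and a 3x4 target.
prediction = torch.randn(3, 4)
target = torch.randn(3, 4)

# Mean Absolute Error: mean of |prediction - target|.
mae_loss = nn.L1Loss()
mae_value = mae_loss(prediction, target)

# Mean Squared Error: mean of (prediction - target) ** 2.
mse_loss = nn.MSELoss()
mse_value = mse_loss(prediction, target)

# Binary Cross Entropy expects probabilities in [0, 1] as input and
# targets in [0, 1], so the raw scores are passed through a sigmoid
# and the labels are random 0/1 values (both are illustrative choices).
probabilities = torch.sigmoid(prediction)
binary_target = torch.randint(0, 2, (3, 4)).float()
bce_loss = nn.BCELoss()
bce_value = bce_loss(probabilities, binary_target)

print("MAE error is: " + str(mae_value.item()))
print("MSE error is: " + str(mse_value.item()))
print("BCE error is: " + str(bce_value.item()))
```

All three modules default to reduction='mean', so each call returns a single scalar tensor; .item() converts it to a plain Python float for printing.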

An objective function is either a loss function or its negative (in specific domains variously called a reward function, a profit function, a utility function, a fitness function, etc.), in which case it is to be maximized.

You can try the tutorial below in Google Colab, which comes with the major data science packages preinstalled, including PyTorch. To run PyTorch locally on your machine, you can download PyTorch from here according to your build. Torch is a tensor library like NumPy with strong GPU support, and torch.nn is a package inside the PyTorch library that helps us create and train neural networks. Read more about torch.nn here. Jump straight to the Jupyter Notebook here.

Mean Absolute Error (MAE) measures the numerical distance between the predicted and true values: it takes the absolute difference of each pair, sums those differences, and divides by the total number of data points. Let's see how to calculate it without using the PyTorch module. The algorithmic way of finding the loss without the PyTorch module starts with import numpy as np and, under the comment # Defining Mean Absolute Error loss function, computes absolute_differences = np.absolute(differences).
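The manual MAE calculation described above can be sketched with NumPy alone. The names differences, absolute_differences and mae_value follow the fragments in the text; the function name mae and the sample arrays are hypothetical, chosen only to make the arithmetic easy to check by hand.

```python
import numpy as np

# Hypothetical example values, not taken from the original notebook.
y_true = np.array([1.0, 2.0, 3.0, 4.0])
y_pred = np.array([1.5, 2.0, 2.0, 6.0])


# Defining Mean Absolute Error loss function
def mae(pred, true):
    # Paired differences between prediction and ground truth.
    differences = pred - true
    # Drop the sign so positive and negative errors do not cancel.
    absolute_differences = np.absolute(differences)
    # Average over the total number of data points.
    return absolute_differences.mean()


mae_value = mae(y_pred, y_true)
print("MAE error is: " + str(mae_value))  # prints "MAE error is: 0.875"
```

Here the absolute differences are 0.5, 0, 1 and 2, which sum to 3.5 over 4 points, giving 0.875.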

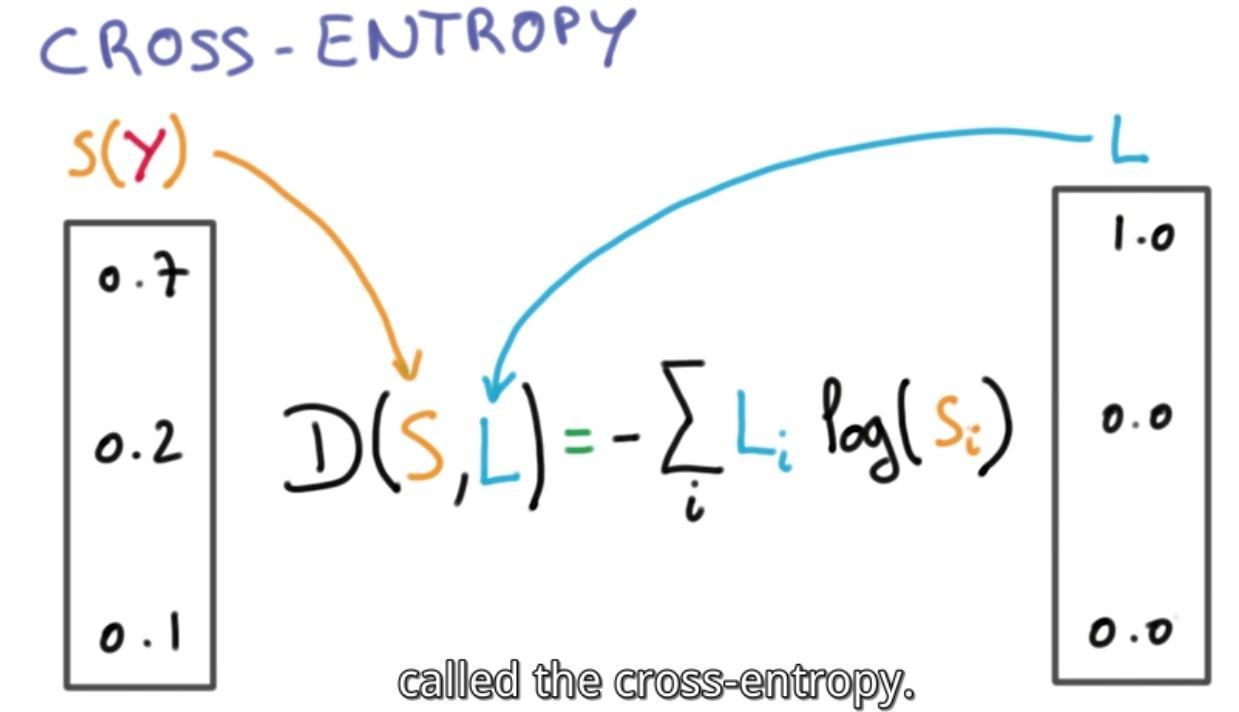

A loss function, or cost function, is a function that maps an event or the values of one or more variables onto a real number intuitively representing some “cost” associated with the event. An optimization problem seeks to minimize a loss function.

Topics covered include: Kullback-Leibler divergence (nn.KLDivLoss); Triplet Margin Loss (nn.TripletMarginLoss); Margin Ranking Loss (nn.MarginRankingLoss); Hinge Embedding Loss (nn.HingeEmbeddingLoss); Binary Cross Entropy (BCELoss) using PyTorch; using the Binary Cross Entropy loss function without the module; and the algorithmic way of finding a loss function without the PyTorch module.

Have you ever wondered how we humans evolved so much? It is because we learn from our mistakes and continuously try to improve on the basis of them. The same holds for machines: just like humans, they can learn from their mistakes. But how? In neural networks and AI, we give algorithms the freedom to find the best prediction, but nothing can improve without comparing itself against its previous mistakes. This is where the loss function comes into the picture.

A loss function measures the mistakes a machine makes: the further the prediction of the machine learning algorithm is from the ground truth, the larger the loss, and the machine improves its outputs by decreasing that loss. Earlier, we implemented loss functions by hand and wrote them according to our problem, but libraries like PyTorch now let users call a loss function in one line of code. Different problems, such as regression or classification, call for different kinds of loss functions, and PyTorch provides almost 19 of them. Today we will discuss the major PyTorch loss functions that are used extensively across machine learning tasks, with implementations in Python code inside a Jupyter notebook.
